About Us

We Test Customer Experience Before Your Customers Do

TrueCX uses AI customers to validate conversational AI, confirm agent readiness, and benchmark competitive performance.

Why TrueCX?

Customer experience runs on conversations—human and AI. But no one can measure the full picture.

We built TrueCX so enterprises can validate agent readiness before live calls, certify AI systems before deployment, and benchmark competitive performance using real interactions.

What is TrueCX?

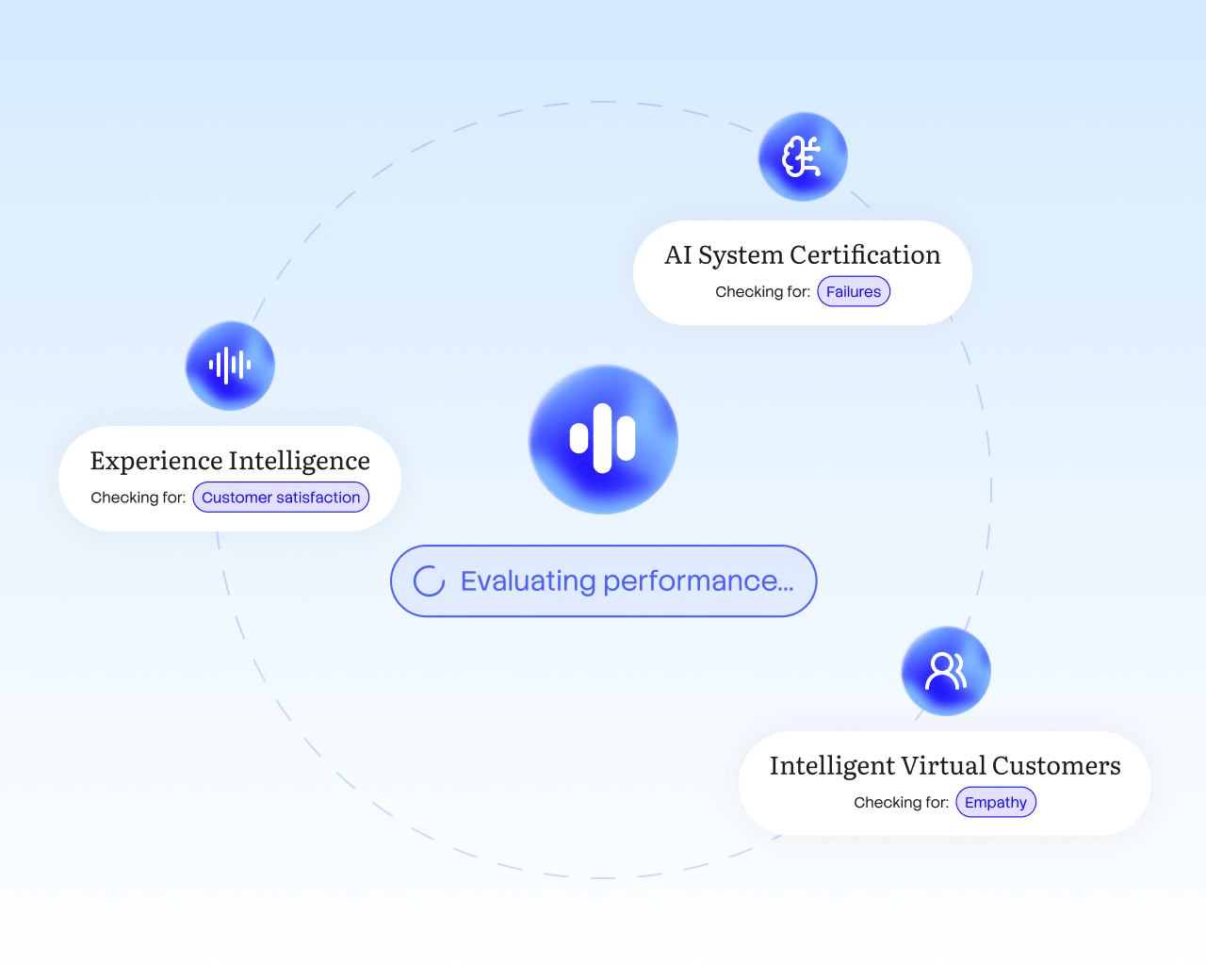

TrueCX uses Intelligent Virtual Customers (IVCs)—AI that interacts with your systems like real customers do—to test conversational performance in three ways:

- Train human agents with realistic practice before live calls.
- Certify AI systems with independent testing before and after deployment.
- Benchmark competitors using standardized interactions across your market.

How TrueCX started

TrueCX CEO and Founder Lonnie Johnston spent 20+ years in customer service technology. Watching capable new agents struggle made the problem clear to him: agents need practice that feels real long before the stakes are real.

He founded TrueCX in 2024 to address this gap. What started as agent training evolved: the same virtual customers that prepare agents now test AI systems and benchmark competitors.

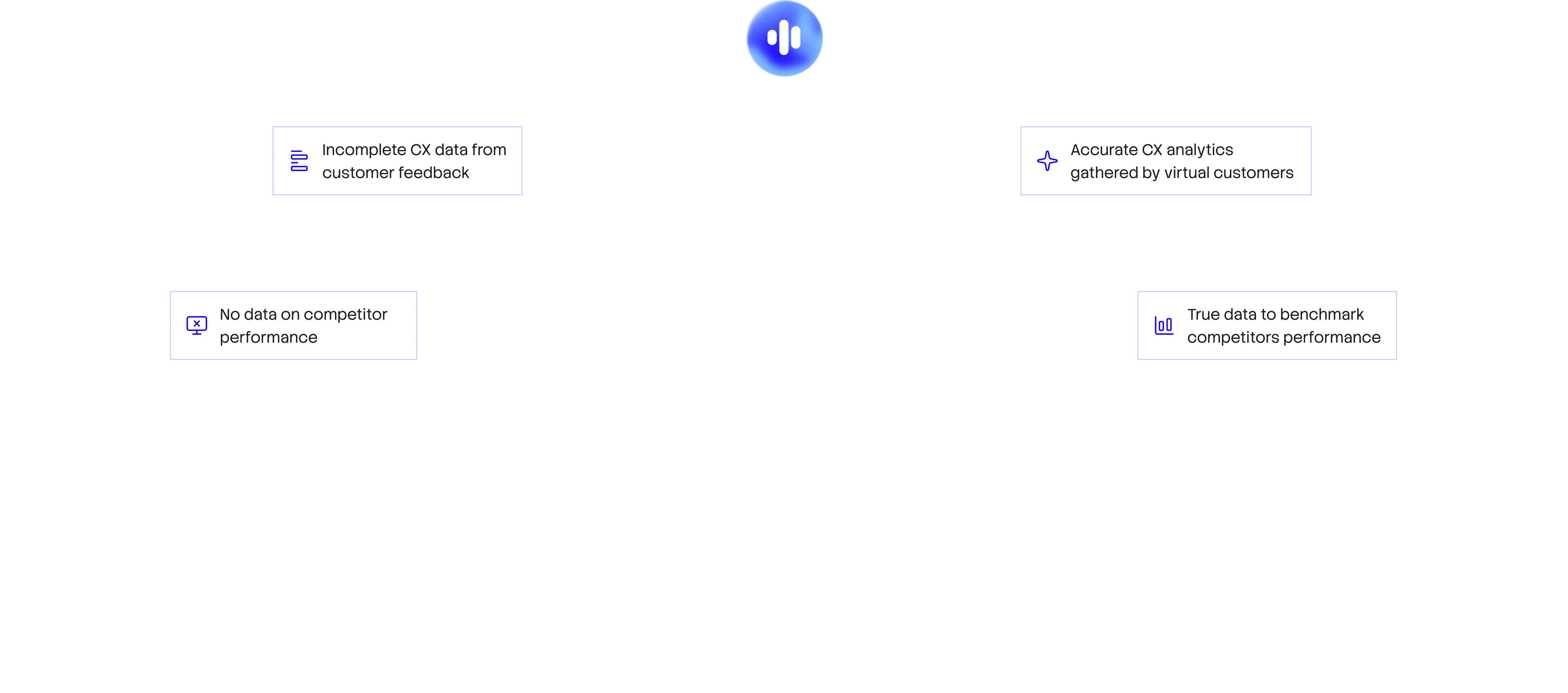

Unqualified agents

Prepared agents

Leadership team

Lonnie Johnston

CEO & Founder

20+ years in customer service technology with an MBA from Rockhurst University. Previously built global success organizations at NICE and scaled revenue at Balto.

Ed Kogan

CTO

Engineering leader with 20+ years of experience scaling teams. A hands-on architect with a track record of delivering AI-powered products at high-growth companies.

Maria Edington

VP of Marketing

B2B SaaS operator with experience building and scaling marketing and operations teams through venture-backed growth.

Bryan Morton

VP of Sales

Sales leader with 25+ years of experience building revenue, leading teams, and closing enterprise deals at the intersection of strategy, technology, and execution.

How we work

AI-passionate, practically minded

We're builders who believe AI should solve real problems, not create impressive demos. Everyone here is obsessed with what AI can do—but only if it actually works when customers use it.

We measure ourselves the way we measure you

High accountability isn't just culture; it's how we operate. If we're asking enterprises to validate performance before deployment, we hold ourselves to the same standard. We test, iterate, and deliver what works. No shortcuts.

Customers shouldn't bear the burden

The gap between what enterprises deploy and what actually works shouldn't be discovered by customers. Whether it's unprepared agents, untested AI, or broken handoffs—we exist to catch failures before real people experience them.